Introduction

Guest-Articles/2020/OIT/Introduction

In the Blending chapter, the subject of color blending was introduced. Blending is the way of implementing transparent surfaces in a 3D scene. In short, transparency delves into the subject of drawing semi-solid or fully see-through objects like glasses in computer graphics. The idea is explained up to a suitable point in that chapter, so if you're unfamiliar with the topic, better read Blending first.

In this article, we are scratching the surface of this topic a bit further, since there are so many techniques involved in implementing such an effect in a 3D environment.

To begin with, we are going to discuss about the limitations of the graphics library/hardware and the hardships they entail, and the reason that why transparency is such a tricky subject. Later on, we will introduce and briefly review some of the more well-known transparency techniques that have been invented and used for the past twenty years associated with the current hardware. Ultimately, we are going to focus on explaining and implementing one of them, which will be the subject of the following part of this article.

Note that the goal of this article is to introduce techniques which have significantly better performance than the technique that was used in the Blending chapter. Otherwise, there isn't a genuinely compelling reason to expand on that matter.

Graphics library/hardware limitations

The reason that this article exists, and you're reading it, is that there is no direct way to draw transparent surfaces with the current technology. Many people wish, that it was as simple as turning on a flag in their graphics API, but that's a fairy tale. Whether, this is a limitation of the graphics libraries or video cards, that's debatable.

As explained in the Blending chapter, the source of this problem arises from combining depth testing and color blending. At the fragment stage, there is no buffer like the depth buffer for transparent pixels that would tell the graphics library, which pixels are fully visible or semi-visible. One of the reasons could be, that there is no efficient way of storing the information of transparent pixels in such a buffer that can hold an infinite number of pixels for each coordinate on the screen. Since each transparent pixel could expose its underlying pixels, therefore there needs to be a way to store different layers of all pixels for all screen coordinates.

This limitation leaves us to think for a way to overcome such an issue and since neither the graphics library nor the hardware gives us a hand, this all has to be done by the developer with the tools at hand. We will examine two methods which are prominent in this subject. One being,

Ordered transparency

The most convenient solution to overcome this issue, is to sort your transparent objects, so they're either drawn from the furthest to the nearest, or from the nearest to the furthest in relation to the camera's position. This way, the depth testing wouldn't affect the outcome of those pixels that have been drawn after/before but over/under a further/closer object. However major the expenditure this method entails for the CPU, it was used in many early games that probably most of us have played.

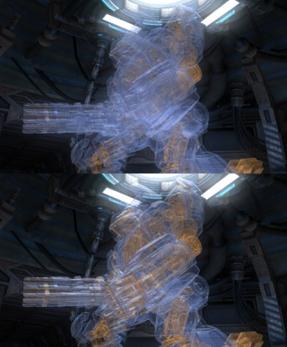

For example, the sample image below shows the importance of blending order. The top part of the image produces an incorrect result with unordered alpha blending, while the bottom correctly sorts the geometry. Note lower visibility of the skeletal structure without correct depth ordering. This image is from ATI Mecha Demo:

So far, we have understood that in order to overcome the limitation of current technology to draw transparent objects, we need order for our transparent objects to be displayed properly on the screen. Ordering takes away performance from your application, and since most of 3D applications are running in real-time, this will be so much more evident as you perform sorting at every frame.

Therefore, we will be looking into the world of order-independent transparency techniques and to find one which better suits our purpose and furthermore our pipeline, so we don't have to sort the objects before drawing.

Order-independent transparency

Order-independent transparency or for short

The goal of OIT techniques is to eliminate the need of sorting transparent objects at draw time. Depending on the technique, some of them must sort fragments for an accurate result, but only at a later stage when all the draw calls have been made, and some of them don't require sorting, but results are approximated.

History

Some of the more advanced techniques that have been invented to overcome the limitation of rendering transparent surfaces, explicitly use a buffer (e.g. a linked list or a 3D array such as [x][y][z]) that can hold multiple layers of pixels' information and can sort pixels on the GPU, normally because of its parallel processing power, as opposed to CPU.

At the same time, there has been hardware capable of facilitating this task by performing on-hardware calculations which is the most convenient way for a developer to have access to transparency out of the box.

Commonly, OIT techniques are separated into two categories which are

Exact OIT

These techniques accurately compute the final color, for which all fragments must be sorted. For high depth complexity scenes, sorting becomes the bottleneck.

One issue with the sorting stage is

Sorting is typically performed in a local array, however performance can be improved further by making use of the GPU's memory hierarchy and sorting in registers, similarly to an external merge sort, especially in conjunction with BMA.

Approximate OIT

Approximate OIT techniques relax the constraint of exact rendering to provide faster results. Higher performance can be gained from not having to store all fragments or only partially sorting the geometry. A number of techniques also compress, or reduce, the fragment data. These include:

- Stochastic Transparency: draw in a higher resolution in full opacity but discard some fragments. Down-sampling will then yield transparency.

- Adaptive Transparency: a two-pass technique where the first constructs a visibility function which compresses on the fly (this compression avoids having to fully sort the fragments) and the second uses this data to composite unordered fragments. Intel's pixel synchronization avoids the need to store all fragments, removing the unbounded memory requirement of many other OIT techniques.

Techniques

Some of the OIT techniques that have been commonly used in the industry are as follows:

- Depth peeling: Introduced in 2001, described a hardware accelerated OIT technique which utilizes the depth buffer to peel a layer of pixels at each pass. With limitations in graphics hardware the scene's geometry had to be rendered many times.

- Dual depth peeling: Introduced in 2008, improves on the performance of depth peeling, still with many-pass rendering limitation.

- Weighted, blended: Published in 2013, utilizes a weighting function and two buffers for pixel color and pixel reveal threshold for the final composition pass. Results in an approximated image with a decent quality in complex scenes.

Implementation

The usual way of performing OIT in 3D applications is to do it in multiple passes. There are at least three passes required for an OIT technique to be performed, so in order to do this, you'll have to have a perfect understanding of how Framebuffers work in OpenGL. Once you're comfortable with Framebuffers, it all boils down to the implementation complexity of the technique you are trying to implement.

Briefly explained, the three passes involved are as follows:

- First pass, is where you draw all of your solid objects, this means any object that does not let the light travel through its geometry.

- Second pass, is where you draw all of your translucent objects. Objects that need alpha discarding, can be rendered in the first pass.

- Third pass, is where you composite the images that resulted from two previous passes and draw that image onto your backbuffer.

This routine is almost identical in implementing OIT techniques across all different pipelines.

In the next part of this article, we are going to implement weighted, blended OIT which is one of the easiest and high performance OIT techniques that has been used in the video game industry for the past ten years.

Further reading

- SEGA Dreamcast Hardware: Dreamcast was one of the few consoles that had hardware implemented order-independent transparency.

- Order-independent transparency: A series of techniques that have a great performance and produce nice results even with the approximated methods.

- Weighted, blended order-independent transparency: One of the easiest OIT techniques in terms of implementation while producing highly acceptable images for complex scenes.

Contact: e-mail